MetaAudio System 1

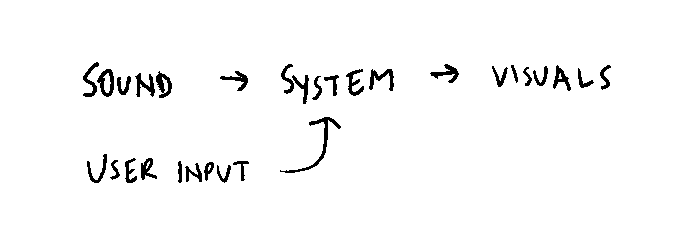

Audio-powered computational systems listen to sound and change their parameters in real time in response to the incoming audio. Audio visualizations are good examples: in this Saber visualization, the audio volume influences how large each colored bar appears, as well as how much it rotates once it appears.
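
As a rough illustration of that kind of mapping, here is a minimal sketch in plain JavaScript. It is not the Saber visualization's code: the helper names `rms` and `barParams`, and the default maximum height and rotation, are assumptions made for this example.

```javascript
// Hypothetical helper: root-mean-square level of one audio frame.
// Samples are assumed to lie in the range [-1, 1].
function rms(samples) {
  if (samples.length === 0) return 0;
  let sumSquares = 0;
  for (const s of samples) sumSquares += s * s;
  return Math.sqrt(sumSquares / samples.length);
}

// Hypothetical mapping: louder audio gives a taller, more rotated bar.
// maxHeight (pixels) and maxRotation (radians) are illustrative defaults.
function barParams(level, maxHeight = 200, maxRotation = Math.PI / 4) {
  const clamped = Math.min(Math.max(level, 0), 1);
  return { height: clamped * maxHeight, rotation: clamped * maxRotation };
}
```

In a drawing loop, each incoming audio frame would be passed through `rms`, and the result through `barParams`, before the bar is drawn.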

It’s also possible to create computational systems that generate audio. In As Space Expanded, the Universe Cooled, the motion of planets according to Newton’s gravitational laws is translated into pitches and reverb effects. In Bounce, the collision of balls with the ground triggers different notes. Most game sound effects can be thought of as simple audio-generating systems.

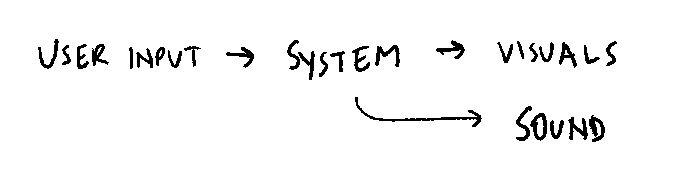

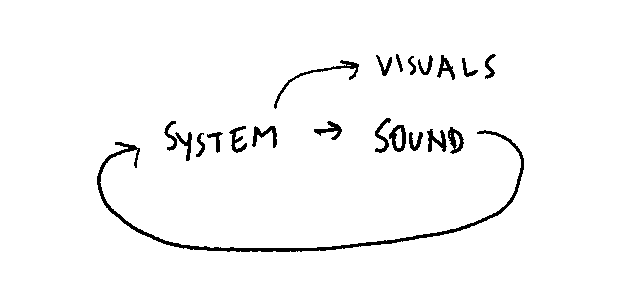

MetaAudio System 1 combines these two types of systems into one that listens to itself in order to generate sound. It is a self-generating composition: a computational system that both responds to audio input and produces audio output, creating a real-time musical dialogue.

A point mass, represented by a black sphere, travels through space. The speed and angle of its travel dictate the pitch and various audio filter effects of a software instrument. The output audio of this software instrument is monitored, and its real-time frequency spectrum deforms the gravitational field of space. For instance, as lower frequencies take hold, the gravitational density of the upper-left region of space increases. The point mass is drawn toward that gravitational density; as it changes direction and accelerates, the note being played changes too. As the notes change, the gravitational field changes as well, which again alters the direction and acceleration of the point mass, and so on in an endless celestial dance.
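
The feedback loop described above can be sketched in a few lines of plain JavaScript. This is a simplified model under stated assumptions, not the project's actual code: the three attractor positions (only the upper-left low-frequency one comes from the description), the softened inverse-square force, the speed-to-note range, and `fakeSpectrum`, which stands in for real-time analysis of the instrument's output, are all hypothetical.

```javascript
// Hypothetical attractor positions, one per frequency band.
const ATTRACTORS = [
  { x: -1, y: 1 }, // low band: upper-left, as in the description above
  { x: 1, y: 1 },  // mid band: placement is an assumption
  { x: 0, y: -1 }, // high band: placement is an assumption
];

// Average a magnitude spectrum into one energy value per attractor.
// Assumes spectrum.length >= bandCount; leftover bins at the end are ignored.
function bandEnergies(spectrum, bandCount = ATTRACTORS.length) {
  const size = Math.floor(spectrum.length / bandCount);
  const energies = [];
  for (let b = 0; b < bandCount; b++) {
    let sum = 0;
    for (let i = b * size; i < (b + 1) * size; i++) sum += spectrum[i];
    energies.push(sum / size);
  }
  return energies;
}

// Advance the point mass one time step. Each attractor pulls with a
// softened inverse-square force scaled by its band energy.
function stepMass(mass, energies, dt = 0.016, softening = 0.05) {
  let ax = 0;
  let ay = 0;
  ATTRACTORS.forEach((a, i) => {
    const dx = a.x - mass.x;
    const dy = a.y - mass.y;
    const d2 = dx * dx + dy * dy + softening; // softening avoids division by zero
    const d = Math.sqrt(d2);
    ax += (energies[i] * dx) / (d2 * d);
    ay += (energies[i] * dy) / (d2 * d);
  });
  const vx = mass.vx + ax * dt;
  const vy = mass.vy + ay * dt;
  return { x: mass.x + vx * dt, y: mass.y + vy * dt, vx, vy };
}

// Map speed to a MIDI note (two octaves starting at note 48) and
// heading to a normalized filter value in [0, 1].
function toSound(mass, maxSpeed = 2) {
  const speed = Math.min(Math.hypot(mass.vx, mass.vy), maxSpeed);
  const note = 48 + Math.round((speed / maxSpeed) * 24);
  const angle = Math.atan2(mass.vy, mass.vx); // -PI..PI
  const filter = (angle + Math.PI) / (2 * Math.PI);
  return { note, filter };
}

// Stand-in for real audio analysis: a flat spectrum with a single peak
// whose bin rises with the note being played.
function fakeSpectrum(note, bins = 12) {
  const spectrum = new Array(bins).fill(0.1);
  const peak = Math.min(bins - 1, Math.floor(((note - 48) / 24) * bins));
  spectrum[peak] = 1;
  return spectrum;
}

// Close the loop: motion -> note -> spectrum -> gravity -> motion.
function runLoop(steps = 100) {
  let mass = { x: 0, y: 0, vx: 0.1, vy: 0 };
  const notes = [];
  for (let i = 0; i < steps; i++) {
    const { note } = toSound(mass);
    notes.push(note);
    mass = stepMass(mass, bandEnergies(fakeSpectrum(note)));
  }
  return { mass, notes };
}
```

Calling `runLoop(100)` returns the final state of the point mass and the sequence of notes it produced. In the real system, the spectrum would presumably come from analysing the software instrument's live output rather than from a stand-in function.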

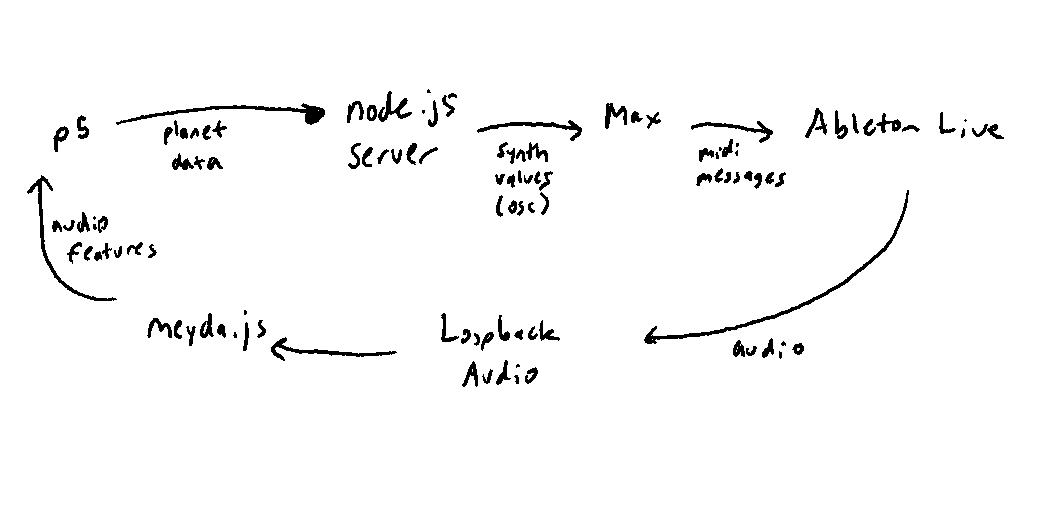

This project was created with Ableton Live, Max/MSP, Node.js, meyda.js, Loopback Audio, and p5.js. View the code on GitHub here.